Kurtosis - how silent is it about "peakedness"?

Westfall offers evidence for "stum". I'm not convinced. Here's why.

Introduction

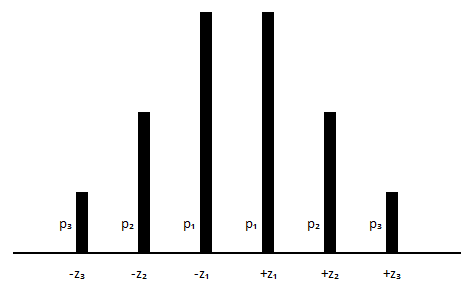

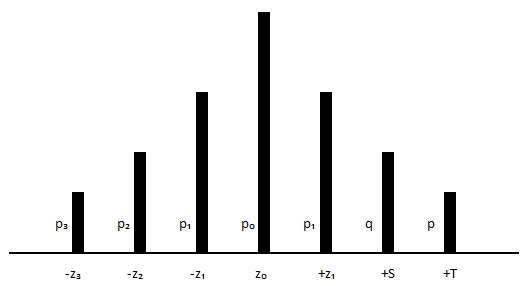

I believe it to be - at best - limited, but let’s pretend it’s possible to learn (in the case of unimodal symmetric distributions) something about kurtosis from discrete distributions with a limited number of values. In my opinion, the minimum number of values needed to be able to say something useful is five or six - but I’d prefer more. The case of a five-value distribution is dealt with here. In the case of a six-value distribution, the requirements for symmetry and unimodality imply that in such a case one is confronted by six variables. (Strictly speaking, as the graph below shows, a discrete distribution with an even number of values has two modes.)

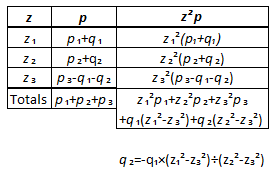

The three positive values of the distribution are (z₁, z₂, z₃) and the three probabilities for those values are (p₁, p₂, p₃). These parameters are restricted by the requirements z₃ > z₂ > z₁ > 0 and p₁ ≽ p₂ ≽ p₃ > 0, with the exception that if one pair of these z-values is equal, all pairs are equal; z₁ = z₂ = z₃. Also, note that if all six probabilities are the same, p₃ = ⅙, establishing an upper bound on this value. Next, looking at the middle four z-values, as p₃ ⭢ 0 the biggest p₂ can become is ¼, and, by the same argument, the maximum possible value for p₁ is ½. Neither limit is attained because p₃ > 0, and p₂ is bounded below only by p₃ (for example, p₁ = 0.4, p₂ = 0.06, p₃ = 0.04 is permitted). In summary, ⅙ ≼ p₁ < ½, 0 < p₂ < ¼ and 0 < p₃ ≼ ⅙.

An immediately evident shortcoming of this family of distributions is that the central pair suggests a bias for platykurtism - one need only consider what impression would be created if one displayed a sequence of diagrams with p₂ ⭢ p₁. However, an example with 7 values (in general, an odd number of values) is hardly better - one is now open to the accusation of bias towards leptokurtism.

Again, imagine a sequence of diagrams with p₂ ⭢ p₁. In my opinion, limited representations such as the examples above should be used with extreme caution. I tried to do this in a previous post in which I explored a five-value discrete distribution. The postage-stamp summary is: I showed that the numerical value of the kurtosis is mainly determined by the “tailedness” - the parameter M in that exploration - but the shape of the distribution (a phrase I prefer to the word “peakedness”) should not be discounted (as expressed by the parameters m and k in that investigation).

The fact to appreciate is that whatever investigation one does of kurtosis, one would prefer it to be expressed in terms of three parameters, with the third determining the value of the kurtosis. Let’s dig into that idea.

Characterising kurtosis

The counter examples in this link are designed to accommodate two facts about 6-value symmetric discrete distributions, namely, firstly, that

p₃ + p₂ + p₁ + p₁ + p₂ + p₃ = 2(p₁ + p₂ + p₃) = 1.

Secondly, the kurtosis of a variable x is the fourth standardized moment of x. That is, with μ = E(x) (the first moment of x) and σ² = Var(x) = E[(x - μ)²] (the second central moment of x), one converts x into y = [x - μ] (centring x) and then into z = [x - μ]÷σ = y÷σ (standardizing x), so that Kurt(x) = E[z⁴] = E[(y÷σ)⁴] = E[y⁴÷{(σ²)²}] = E[(x - μ)⁴÷(σ²)²] = E[{(x - μ)÷σ}⁴]. The centring and standardizing will be avoided in calculations by requiring E[x] = 0 and E[x²] = 1. Since the first is implied by the requirement for a symmetric distribution, we are left with

E[x²] = E[z²] = 2[p₁z₁² + p₂z₂² + p₃z₃²] = 1 for the current family of distributions.

The first restriction allows one to solve for p₃ in terms of the other probabilities and the second allows one to solve for z₃ in terms of the remaining variables. Instead of the original 6 unknowns, we now have 4. Our interest is in knowing the effect of the choice of those four values on the kurtosis,

E[z⁴] = 2[p₁z₁⁴ + p₂z₂⁴ + p₃z₃⁴],

so, if we write K for the value of the kurtosis, that value could be expressed in terms of four variables. One way to do this is to introduce the variables M and m used in the case of the five value discrete distribution investigated in an earlier post. Let p₂ = mp₃ and z₃ = Mz₂. Then p₁ ≽ p₂ ≽ p₃ > 0 leads to p₁ ≽ mp₃ ≽ p₃ > 0 which simplifies to m≽1 while z₃ > z₂ > z₁ > 0 leads to Mz₂ > z₂ > z₁ > 0 which simplifies to M>1.

If we re-parametrise, replacing {p₁, p₂, z₁, z₂} with {p₁, z₁, m, M} (restricted by M>1, m≽1) we have

p₃ = (1-2p₁)÷[2(1+m)] and p₂ = m(1-2p₁)÷[2(1+m)], while

z₂² = [(1-2p₁z₁²)(1+m)]÷[(1-2p₁)(M²+m)] and z₃² = M²[(1-2p₁z₁²)(1+m)]÷[(1-2p₁)(M²+m)]

and hence

E[z⁴] = 2p₁z₁⁴ + [(1-2p₁z₁²)²÷(1-2p₁)]·[(M⁴+m)(1+m)÷(M²+m)²]
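The re-parametrisation can be checked numerically. The following is a minimal Python sketch, not part of the original derivation: the function names and the parameter choice (p₁ = 0.25, z₁ = 0.5, m = 9, M = 2) are illustrative assumptions. It builds the positive half of a six-value member from {p₁, z₁, m, M} and compares the directly computed fourth moment with the closed form.

```python
import math

def six_value_member(p1, z1, m, M):
    """Positive half (z, p) of the symmetric six-value family for given p1, z1, m, M."""
    p3 = (1 - 2 * p1) / (2 * (1 + m))
    p2 = m * p3
    z2 = math.sqrt((1 - 2 * p1 * z1**2) * (1 + m) / ((1 - 2 * p1) * (M**2 + m)))
    z3 = M * z2
    return (z1, z2, z3), (p1, p2, p3)

def kurtosis_formula(p1, z1, m, M):
    """Closed form: 2*p1*z1^4 + g(p1, z1) * h(M, m)."""
    g = (1 - 2 * p1 * z1**2) ** 2 / (1 - 2 * p1)
    h = (M**4 + m) * (1 + m) / (M**2 + m) ** 2
    return 2 * p1 * z1**4 + g * h

def even_moment(z, p, k):
    """E[z^k] for even k; the factor 2 accounts for the mirrored negative values."""
    return 2 * sum(pi * zi**k for zi, pi in zip(z, p))

# Illustrative (hypothetical) parameter choice, not taken from the post's tables.
z, p = six_value_member(p1=0.25, z1=0.5, m=9.0, M=2.0)
total_probability = 2 * sum(p)
variance = even_moment(z, p, 2)
kurtosis_direct = even_moment(z, p, 4)
kurtosis_closed = kurtosis_formula(0.25, 0.5, 9.0, 2.0)
```

For this choice the probabilities total to one, the variance is one, and the two kurtosis values agree to rounding error.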

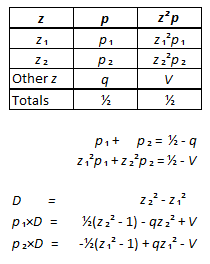

The formulas derived above are informative. First, (writing K for the value calculated for the kurtosis) look at the sequence of formulas (two of which are restrictions) the system is built on:

p₁ + p₂ + p₃ = ½

p₁z₁² + p₂z₂² + p₃z₃² = ½

p₁z₁⁴ + p₂z₂⁴ + p₃z₃⁴ = ½K

It is immediately obvious that the two restrictions (the first two equations) can only be satisfied by (at least) z₁<1. At some point, continued increase of z₃ (increased tailedness) would force z₂<1. In my opinion, this should not be allowed. For this toy distribution to be useful, the restriction z₂ > 1 should apply. Indeed since z₂ is to represent the shoulder of the distribution, it might make sense to restrict the shoulder value to between one and (say) two standard deviations. Such a restriction would limit the combinations of m and M which would be allowed.

Turning to the formula derived for the kurtosis, notice that it’s in the form

K = f(p₁,z₁) + g(p₁,z₁)·h(M,m), with ⅙ ≼ p₁ < ½ and 0 < z₁ < 1.

We see that 0 < f(p₁,z₁) < 1 i.e. 0 < 2p₁z₁⁴ < 1 i.e. that the first term makes little contribution to K. In the case of g(p₁,z₁) the division with 1-2p₁ implies that the most we can say is that g(p₁,z₁) > 0. However, we can make some sense of the final term in the formula for the kurtosis if we limit ourselves to cases where g(p₁,z₁) = 1. Cases satisfying this constraint vary between z₁ = 0.741964 when p₁ = ⅙ and z₁ ⭢ 1 as p₁ ⭢ ½. For these solutions, the first term, f(p₁,z₁), varies between 0.101021 and 1, i.e. at most the kurtosis will be 1+h(M,m) when z₁ and p₁ have been chosen to yield g(p₁,z₁) = 1.
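The boundary values quoted for the case g(p₁,z₁) = 1 can be reproduced with a few lines of Python. This is a sketch under one assumption: the root 1-2p₁z₁² > 0 is taken (consistent with z₁ < 1 and p₁ < ½), so that z₁² = [1 - √(1-2p₁)]÷(2p₁). The function names are illustrative.

```python
import math

def g(p1, z1):
    return (1 - 2 * p1 * z1**2) ** 2 / (1 - 2 * p1)

def f(p1, z1):
    return 2 * p1 * z1**4

def z1_for_unit_g(p1):
    """z1 solving g(p1, z1) = 1, taking the root with 1 - 2*p1*z1**2 > 0."""
    return math.sqrt((1 - math.sqrt(1 - 2 * p1)) / (2 * p1))

z1_at_sixth = z1_for_unit_g(1 / 6)   # lower end of the permitted range of p1
f_at_sixth = f(1 / 6, z1_at_sixth)
z1_near_half = z1_for_unit_g(0.4999999)  # p1 close to its upper limit
```

Rounded to six decimals, `z1_at_sixth` and `f_at_sixth` agree with the 0.741964 and 0.101021 quoted above.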

The factor of the second term of the kurtosis, h(M,m), = (1+m)(M⁴+m)÷(M²+m)², is informative. If values have been chosen for z₁ and p₁ then if we specify a value for the kurtosis, K, we can solve for h(M,m):

(1+m)(M⁴+m)÷(M²+m)² = [K - 2p₁z₁⁴](1-2p₁)÷(1-2p₁z₁²)²

One can then explore which combinations of M and m yield the calculated value for the factor. It is essential to understand that m operates on the probability values (p) of the distribution and the M operates on the values (z) of the distribution and that the kurtosis is determined by a combination of m and M. Selecting p-values and gloating “look, only z-values change” or selecting z-values and gloating “look, only p-values change” is unhelpful!
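One way to explore those combinations is to fix m and solve for M. The sketch below is an illustration rather than the method used in the post: it treats h(M,m) = target as a quadratic in u = M² and takes the root with u > 1. For M > 1, h(M,m) rises from 1 towards 1+m, so a solution exists only when 1 < target < 1+m. The function names and the trial values of m are assumptions.

```python
import math

def h(M, m):
    return (1 + m) * (M**4 + m) / (M**2 + m) ** 2

def h_required(K, p1, z1):
    """The value h(M, m) must take for the kurtosis to equal K."""
    return (K - 2 * p1 * z1**4) * (1 - 2 * p1) / (1 - 2 * p1 * z1**2) ** 2

def M_for(m, target):
    """Solve h(M, m) = target for M > 1; requires 1 < target < 1 + m."""
    if not 1 < target < 1 + m:
        raise ValueError("for M > 1, h(M, m) lies strictly between 1 and 1 + m")
    # Root of (1 + m - target)*u**2 - 2*target*m*u + m*(1 + m) - target*m**2 = 0 with u > 1.
    u = (target * m + (1 + m) * math.sqrt(m * (target - 1))) / (1 + m - target)
    return math.sqrt(u)

target = h_required(K=3.0, p1=0.25, z1=0.5)
# Hypothetical trial values of m; each is paired with the M that reproduces K = 3.
pairs = [(m, M_for(m, target)) for m in (1.0, 3.0, 9.0)]
round_trip = M_for(9.0, h(2.0, 9.0))
```

Each (m, M) pair in `pairs` gives the same kurtosis, which illustrates the point that K is fixed by the combination of m and M rather than by either alone.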

Constructing valid distributions in this family

This link offers two methods of construction. The first selects fixed values for z₁, p₁, z₂ and allows for a variable θ which generates the family. Keeping z₂ as a variable, z, the distributions are specified by:

z₁ = 0.5 with p₁ = 0.25

z₂ = z with p₂ = 0.25 - θ÷2

z₃² = (1-2p₁z₁² - ½z²)÷θ + z² with p₃ = θ÷2 (using the positive root)

along with three negative z-values with the same p-value as the positive z-value.

After selecting a value for θ, the kurtosis is

K = 2p₁z₁⁴ - ½z⁴ + 2z²(1-2p₁z₁²) + (1-2p₁z₁² - ½z²)²÷θ

or, if a value for K is specified,

θ = (1-2p₁z₁² - ½z²)²÷[K -2p₁z₁⁴ + ½z⁴ - 2z²(1-2p₁z₁²)].
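A short Python sketch of this first construction follows; the function names are illustrative. Expanding θ·z₃⁴ with z₃² = (1-2p₁z₁² - ½z²)÷θ + z² shows that the term in θ carries the square of (1-2p₁z₁² - ½z²), and the code uses that form. It solves for θ with z₂ = 1.2 and K = 3 or K = 6, rebuilds the distribution and recomputes the moments.

```python
import math

P1, Z1 = 0.25, 0.5  # fixed centre pair of the first construction

def theta_for_kurtosis(K, z):
    a = 1 - 2 * P1 * Z1**2 - 0.5 * z**2
    return a**2 / (K - 2 * P1 * Z1**4 + 0.5 * z**4 - 2 * z**2 * (1 - 2 * P1 * Z1**2))

def build(theta, z):
    """Positive half (z-values, p-values) for a given theta and shoulder value z."""
    a = 1 - 2 * P1 * Z1**2 - 0.5 * z**2
    z3 = math.sqrt(a / theta + z**2)
    return (Z1, z, z3), (P1, 0.25 - theta / 2, theta / 2)

def even_moment(zs, ps, k):
    return 2 * sum(p * x**k for x, p in zip(zs, ps))

zs3, ps3 = build(theta_for_kurtosis(3.0, 1.2), 1.2)
zs6, ps6 = build(theta_for_kurtosis(6.0, 1.2), 1.2)
p3_change = ps3[2] - ps6[2]  # how much p3 shrinks when K goes from 3 to 6
```

With z₂ = 1.2 the change in p₃ between the K = 3 and K = 6 members rounds to 0.0054.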

The second method has (again, using the positive roots for the z values)

z₁² = θ with p₁ = θ÷2

z₂² = 2θ with p₂ = (1 - θ)÷4

z₃² = 2 with p₃ = (1 - θ)÷4

with each z²-value representing a pair of positive and negative z-values, each with the stated probability. (This method traces to Counterexample 2 in the link. The counterexample is discussed further in Appendix A.)

A third method is to select values for z₁, p₁, p₂ (so that one can immediately solve for p₃ = ½ - p₁ - p₂ and then calculate m = p₂÷p₃, i.e. p₂ = mp₃). Setting z₂² = C - F and z₃² = C + mF the restriction on the variance,

½ = p₁z₁² + p₂z₂² + p₃z₃² = p₁z₁² + p₂(C - F) + p₃(C + mF) = p₁z₁² + p₂C - p₂F + p₃C + mp₃F,

implies that C = (1-2p₁z₁²)÷(1-2p₁). The variable F is the equivalent of θ in the first method of construction. After selecting a value for F (and hence values of z₂ and z₃) one may calculate

K = 2[p₁z₁⁴ + p₂z₂⁴ + p₃z₃⁴] = 2[p₁z₁⁴ + p₂(C - F)² + p₃(C + mF)²]

= 2p₁z₁⁴ + (1-2p₁z₁²)²÷(1-2p₁) + 2(1+m)p₂F²

or, if a specific value of K is required, one can solve for F:

F² = [K - 2p₁z₁⁴ - (1-2p₁z₁²)²÷(1-2p₁)]÷[2(1+m)p₂].
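The third method can be sketched in Python as follows. The function name and the example inputs (p₁ = 0.25, z₁ = 0.5, p₂ = 0.225, K = 3) are illustrative assumptions. The code solves for F, builds z₂ and z₃ from C and F, and recomputes the variance and kurtosis.

```python
import math

def third_method(p1, z1, p2, K):
    """Positive half (z-values, p-values) with kurtosis K, using z2^2 = C - F and z3^2 = C + m*F."""
    p3 = 0.5 - p1 - p2
    m = p2 / p3
    C = (1 - 2 * p1 * z1**2) / (1 - 2 * p1)
    base = 2 * p1 * z1**4 + (1 - 2 * p1 * z1**2) ** 2 / (1 - 2 * p1)
    F_squared = (K - base) / (2 * (1 + m) * p2)
    if F_squared < 0:
        raise ValueError("K is below the minimum attainable for these p1, z1, p2")
    F = math.sqrt(F_squared)
    if C - F <= 0:
        raise ValueError("F is too large: z2 squared would not be positive")
    return (z1, math.sqrt(C - F), math.sqrt(C + m * F)), (p1, p2, p3)

zs, ps = third_method(p1=0.25, z1=0.5, p2=0.225, K=3.0)
variance = 2 * sum(p * x**2 for x, p in zip(zs, ps))
kurtosis = 2 * sum(p * x**4 for x, p in zip(zs, ps))
```

The two guards reflect the fact that not every K is attainable once p₁, z₁ and p₂ have been fixed.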

Illustrative examples

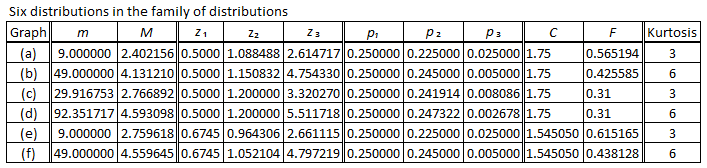

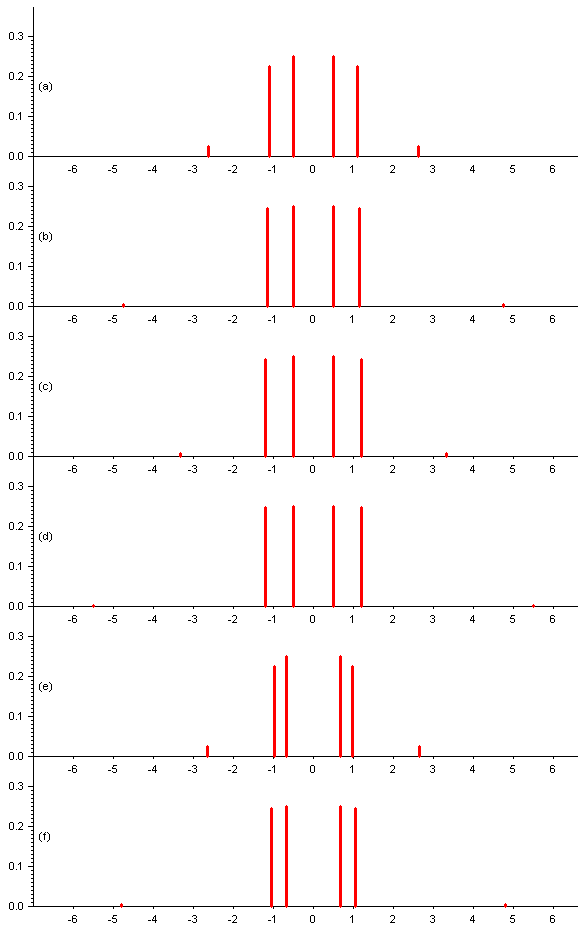

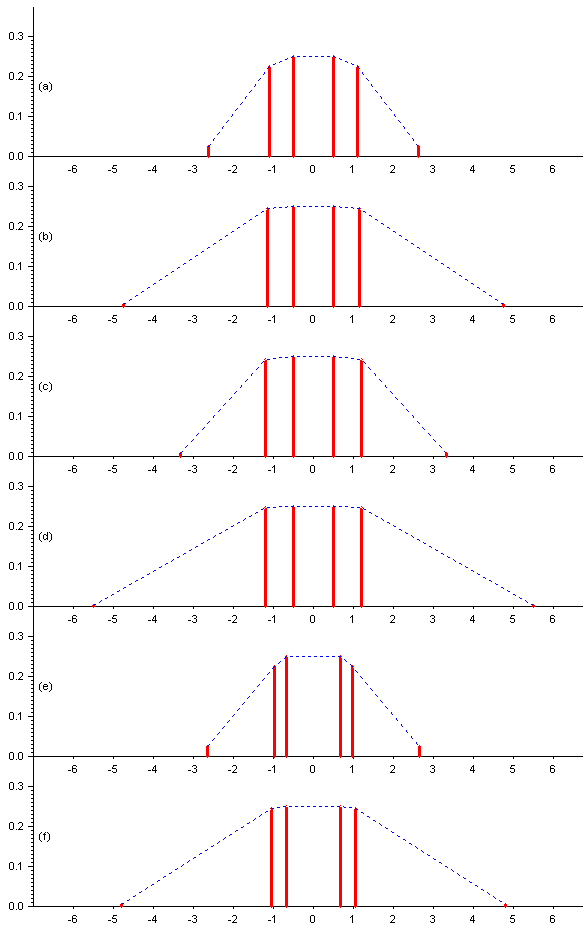

Graphing selected cases will be informative. First, to select a case similar to the standard used in evaluating kurtosis, the Gaussian (a.k.a. Normal) distribution, graph (a) is the first example in the table - and in the graphs - below. Along with K = 3 (the value for the Gaussian distribution), it has z₁ = 0.5, p₁ = 0.25 and p₃ = 0.025 (in an attempt to position z₃ at what one might possibly consider the boundary of the tails). This is an attempt to display a mesokurtic distribution from the family concerned.

The next graph, (b), has z₁ = 0.5, p₁ = 0.25 and p₃ = 0.005 but K = 6, in an attempt to display a leptokurtic distribution from the family concerned.

The values chosen for z₁ and p₁ in the next two distributions match the choices made in “Counterexample 1” in this link, which goes on to select z₂ = 1.2. I selected K = 3 and K = 6 to obtain graphs (c) and (d), below.

The final pair selects z₁ based on the fact that, for the standardized Gaussian distribution, the probability that z is between 0 and +z₁ is 0.25 (and hence 0.5 between -z₁ and +z₁) when z₁ = 0.674490, which I rounded to four decimal places. Then p₃ = 0.025, K = 3 was selected for graph (e) and p₃ = 0.005, K = 6 for graph (f). All six graphs have p₁ = 0.25.

It seems obvious that this family of distributions is a very limited tool for explaining kurtosis. Mathematically correct, it describes a family of distributions where fixing the centre to contain half the distribution limits the potential of the remaining variables of the family to represent various shapes of distribution. Distributions (a), (c) and (e) are supposed to display mesokurtism (K = 3). Perhaps. If you say so. However, distributions (b), (d) and (f) are supposed to display leptokurtism (K = 6, i.e. > 3). The failure to do so is a consequence of fixing the centre and is not a consequence of some inability of kurtosis as a measure. Notice what increasing K did. If z₂ is kept constant - set equal to 1.2, as in graphs (c) and (d) - then doubling (increasing) K is achieved by moving z₃ further from the centre. This changes the value of the variance, so, to bring that back to unity, one must adjust (decrease) p₃, which must be matched by an adjustment (increase) to p₂. That adjustment is small, 0.0054 (to four decimal places) for graphs (c) and (d), so it is difficult to see in the graphs above - but the increase in z₃ is certainly visible and this changes the shape of the distribution. To emphasise this point, I’ve redrawn the graphs above, adding a dotted line connecting the tops of the lines representing the probabilities. Please view these graphs thinking “3, 6, 3, 6, 3, 6” and questioning, what do these values suggest to me?

The change in shape is assuredly almost entirely due to the increased tailedness - but comparing (b) to (a) or (f) to (e) shows that even in this limited family, there is a change to the shape of the distribution in the shoulders of the distribution when K increases, despite the centres being held constant.

What of Westfall’s key examples?

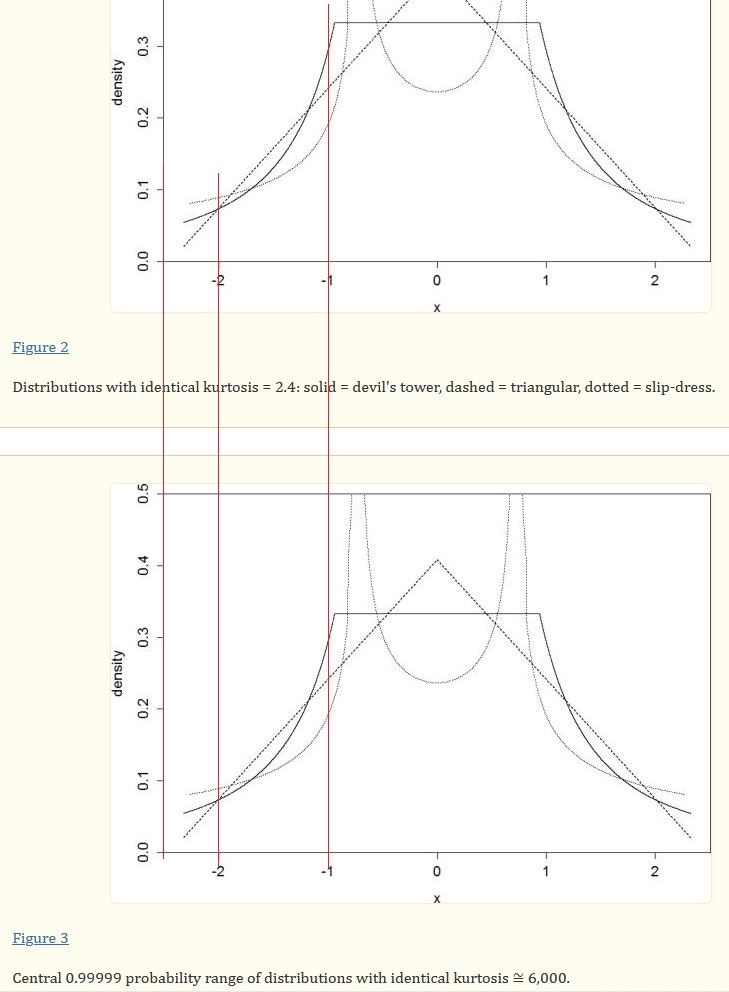

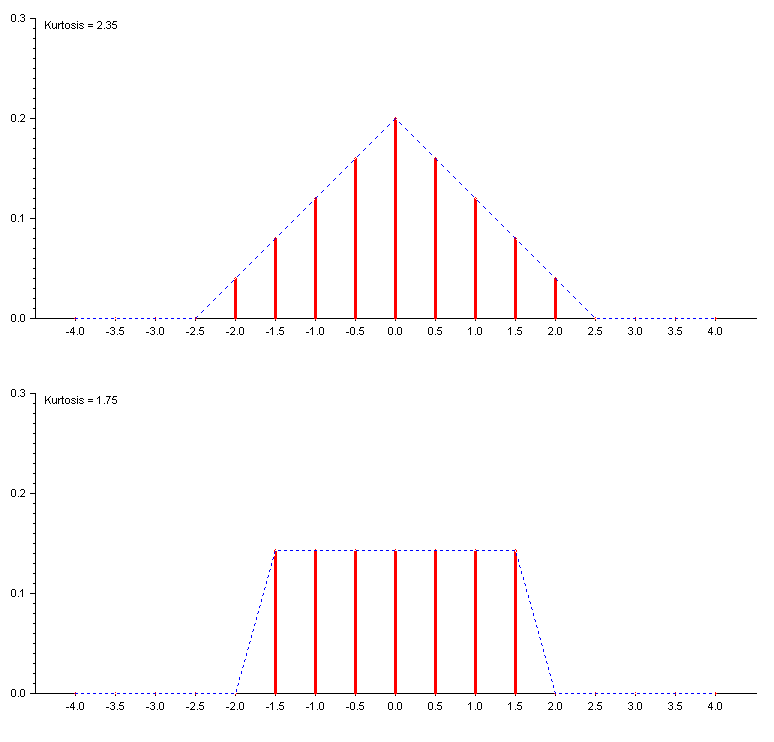

In Figures 2 and 3 of his article, Westfall shows three distributions. In Figure 2 the distributions have kurtosis values of 2.4 while, in Figure 3, the distributions have kurtosis values in the region of 6000. There can be no doubt that, at the level of resolution used, the two sets of three distributions are indistinguishable. I’ve added vertical lines to Westfall’s graphs below, for your convenience.

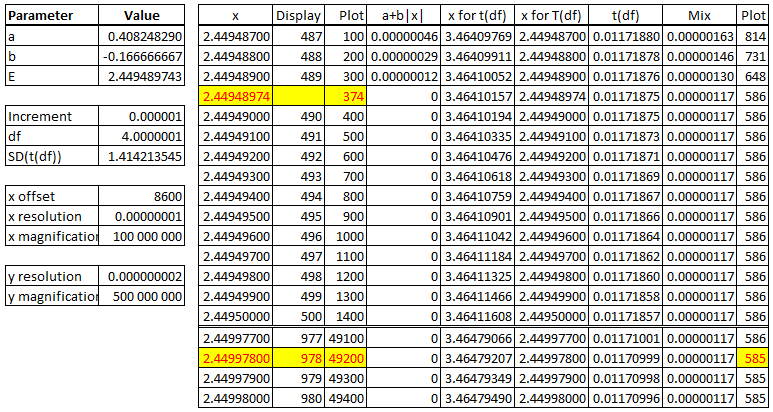

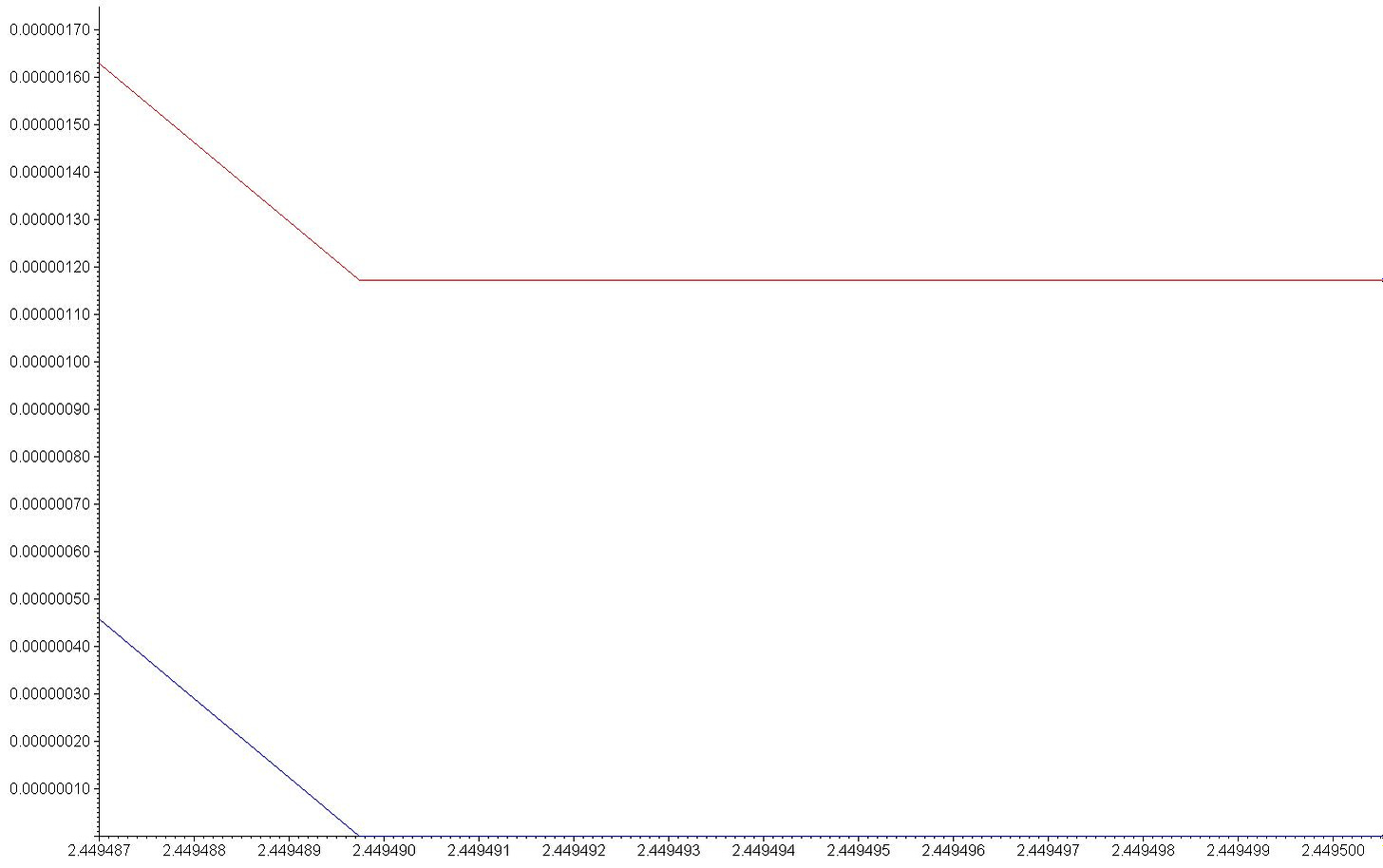

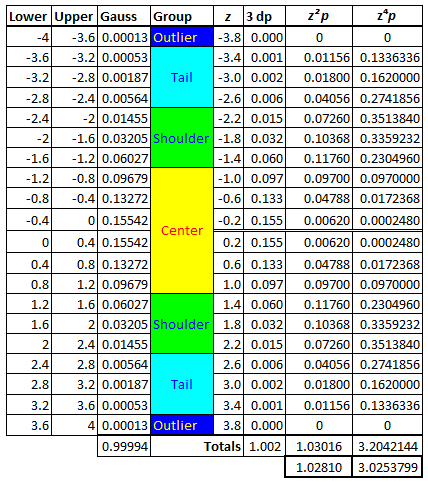

How is this possible? Concentrating on the triangular distributions in the two Figures, how can two distributions be indistinguishable but have such vastly different values for the kurtosis? To answer the question, it is instructive to magnify Westfall’s figures in the area where the triangular distribution meets the x-axis at x = √6 ~ 2.45. (I’m using the symbol x in this section, instead of z, for a standardized variable, to match Westfall’s graphs.) The calculations are shown in the table below. The first column, “x”, are the values plotted on the horizontal axis of the graph below the table. The triangular distribution (fourth column) is plotted in blue (three non-zero values followed by a string of zeroes) and the “triangular” distribution in Westfall’s Figure 3 (the second-last column) is plotted in red; the last column is the pixel value for this plot. The pixel values for the horizontal axis are in the third column. I’ve hidden rows in the calculation (at the double-line separator) but if I wanted to extend the graph to include the point where the last column decreases by one pixel, I’d need a width more than thirty times the width of the graph shown below the table.

(The red line is actually curved - but in this magnification the curvature is not evident, not even on the sloped part.) The difference between the two distributions appears to be large in my magnification but in fact it is 0.00000117 when |x|=√6 ~ 2.44948974. After that, as |x| ⭢ ∞, the difference approaches zero. However, arithmetically, the difference is sufficiently large for so long, for such large values of |x|, that the eventual contribution of the tails, in a calculation involving the fourth power of x, is the amount of ~6000 the kurtosis has.

So, when the x-axis is magnified by a factor of 1000 million and the y-axis is magnified by a factor of 500 million, the difference between the distributions is displayed in the graph above as a gap of 585 pixels at x = √6. Reduce the magnification factor of the y-axis to a million and the difference between the two distributions will be a (rounded) single pixel. Something is “rotten” in this “state of Denmark”!

I’m struck by the resemblance of this situation to Zeno’s dichotomy paradox. If you set out on a journey from A to B, you will reach a point when you are halfway between A and B. If you rename that point “A” and set out for B, you will …. Simply put, the paradox is that since one is forever “halfway there”, one is never “there”, at the destination, B.

To see that, indeed, Westfall’s mixture distribution has a kurtosis of ~6000 compared to the original distribution, one would have to view the two distributions, side by side, with x stretching from -∞ to +∞, with a magnification such as shown above.

In the real world, a traveller arrives at B. In the real world, there is no difference between the two “triangular” distributions Westfall offers in his Figures 2 and 3. Saying that the Figure 3 distributions have kurtosis values in excess of 6000 is equivalent to saying “you will never arrive at B”. The mathematical statement is true - but displaying that reality is not possible in the reality of the world we inhabit. Nobody is willing to consider a page a million times larger (in height and in width) than the page you’re reading.

Google a characteristic such as “the distribution of the length of adult males” and you will discover that it is considered to be approximately Gaussian. Why only “approximately”? Well, one issue is that the Gaussian distribution stretches from -∞ to +∞. That means that if you ignore reality, you could call your favourite skyscraper to mind and ask: What proportion of adult males are taller than that skyscraper? The mathematical result is non-zero. If you think that ridiculous, be reminded that every Gaussian distribution extends below zero. Therefore you could ask the mathematically valid question: What proportion of adult males has length less than zero? The calculation would yield a non-zero amount. Both questions (and their answers) are ridiculous because they ignore reality. To be precise, they ignore the fact that mathematics can be used to model (represent) reality but nobody demands that reality should be identical, in all respects, to the chosen mathematical model.

Reality

It seems to me that all the mathematics in the above is unhelpful in understanding what kurtosis measures. In penance, let me try to offer mathematics which, I hope, does help explain kurtosis to a layman. Although I’m sceptical about the usefulness of discrete distributions with a handful of values, I think a distribution with, say, 17 values will be instructive.

As we shall see, there is a huge advantage (arithmetically) in an odd number of values. We shall also suggest that a distribution has three, possibly four areas, the centre, the “shoulders” and the tail - and, beyond the tail, the fourth group, the outliers. That implies a minimum of a 7-value distribution (outlier, tail, shoulder, centre, shoulder, tail, outlier) but I prefer 1+3+3+ [3] +3+3+1 = 17 (where I’ve identified the central count to emphasize it). Please feel free to redo my arithmetic below if you have a different preference. In particular, I offer discussion of a discrete distribution with an even number of values, none of which are zero, in Appendix B.

The crucial point to understand is that the mathematics associated with kurtosis is centred on two restrictions. Given nine z-values, one equal to zero and the other eight each occurring as a pair of a negative and a positive value (so 17 values in total), and nine p-values (both members of a pair having the same p-value), these z’s and p’s must satisfy the following restrictions:

1. The probabilities, the p-values, must be between 0 and 1 and they must total to 1.

2. The products of the square of each z-value and its p-value must total to 1 - the variance of the distribution must be unity.

To this I add two more restrictions to define the family of distributions I’m examining:

3. The z-values must be equally spaced.

4. The probabilities, p, for z, must be a decreasing sequence (with equality permissible in limited cases) with increasing |z|.
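The four restrictions can be expressed as a small checking function. This is a sketch only: the function name, the tolerance, and the convention of passing just the non-negative z-values (with z = 0 first) are assumptions made for illustration. The example fed to it is a hypothetical member with equal probabilities of 1÷7 on 0, ±0.5, ±1 and ±1.5 and zero elsewhere.

```python
def check_family(z, p, tol=1e-9):
    """Check the four restrictions for a symmetric distribution.

    z, p list the values and probabilities for z >= 0 in increasing order,
    with z[0] == 0; every z > 0 is mirrored at -z with the same probability.
    """
    total = p[0] + 2 * sum(p[1:])
    variance = 2 * sum(pi * zi**2 for zi, pi in zip(z[1:], p[1:]))
    steps = [b - a for a, b in zip(z, z[1:])]
    return {
        "probabilities_valid": all(0 <= pi <= 1 for pi in p) and abs(total - 1) < tol,
        "unit_variance": abs(variance - 1) < tol,
        "equally_spaced": all(abs(s - steps[0]) < tol for s in steps),
        "non_increasing": all(a >= b for a, b in zip(p, p[1:])),
    }

z = [0.5 * k for k in range(9)]   # 0, 0.5, ..., 4.0
p = [1 / 7] * 4 + [0.0] * 5       # hypothetical example, not from the post's tables
report = check_family(z, p)
```

Returning a dictionary rather than a single flag makes it easy to see which restriction a candidate set of p-values violates.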

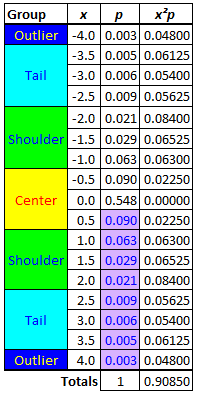

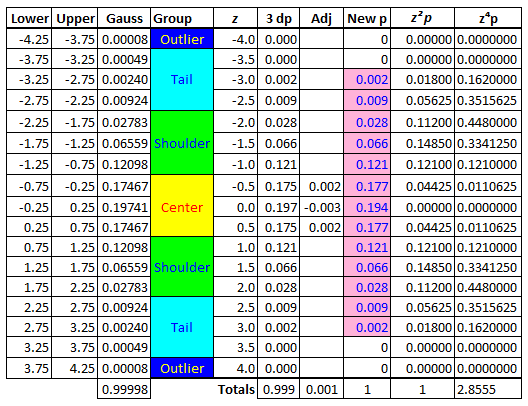

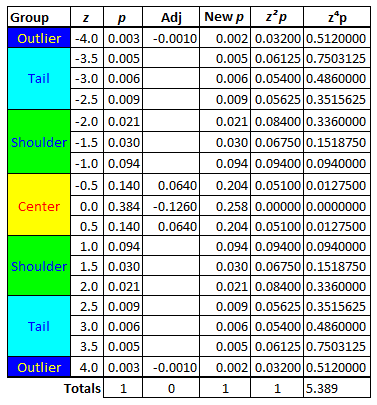

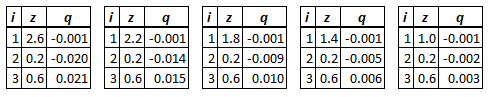

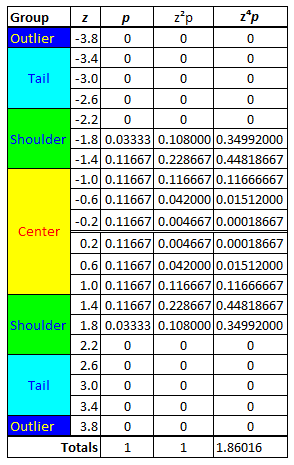

I suggest fixing the z-values, i.e. I suggest using the 9 values from 0 to 4 in steps of 0.5 in the table below (pairing each positive value with a negative one) and varying only p-values to illustrate kurtosis. I also suggest limiting p-values to a few decimal places, the fewer, the better. In the example below I selected eight arbitrary three-digit numbers between 0 and 0.1, arranged them from largest to smallest and entered them, 0.090 … 0.003, into the table below.

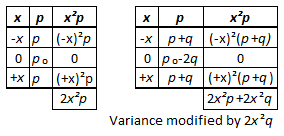

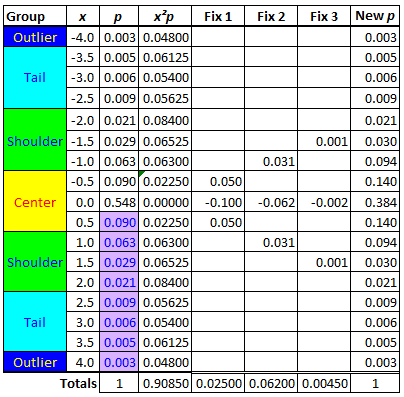

The table is completed by copying the p-value for every positive x (i.e. every x>0) to -x and then setting p₀ equal to one minus twice the sum of the p-values for positive x-values. One is now able to construct the last column in the table above. The variance must be equal to unity for the distribution to be one of a standardized variable (which is why I’m using x for the variable - I’ll change that to z once the variance is equal to unity). How could one increase the variance from 0.9085 to unity without changing the total of the probabilities (which must be unity)? That’s easy: reduce p₀ (which will have no effect on the variance) and increase the p-value for a non-zero x-value (because this will have an effect on the variance) in such a way as to ensure the total of the p-values remains unchanged. Here’s a “before and after” display, with a statement of the consequences of the change to the p-values.

Using the last formula in the graphic above for three values of x, I found a combination which did what was needed.

The total at the bottom of the columns Fix 1 to Fix 3 is not the total of the column (as it usually is - the column total is obviously zero) but the value of the change to the variance. The total of 0.025+0.062+0.0045 = 0.0915 is the shortfall, 1 - 0.9085.
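The arithmetic behind each fix is that moving a probability q from p₀ to each of +x and -x (so p₀ falls by 2q) raises the variance by 2x²q. A minimal Python sketch follows. The pairing of the three variance gains with |x| = 0.5, 1.0 and 1.5 is my assumption, since the tables are images; it is consistent with the p₀ values of 0.548 and 0.384 that the post quotes for this example.

```python
def transfer_from_centre(x, variance_gain):
    """Probability q to add to each of +x and -x (taking 2q from p0) for a given variance gain."""
    return variance_gain / (2 * x**2)

# (|x|, variance gain) for Fix 1 to Fix 3; the |x| values are assumed, see the lead-in.
fixes = [(0.5, 0.025), (1.0, 0.062), (1.5, 0.0045)]
qs = [transfer_from_centre(x, gain) for x, gain in fixes]

shortfall = 1 - 0.9085
total_gain = sum(gain for _, gain in fixes)
p0_after = 0.548 - 2 * sum(qs)
```

Because small |x| needs a large q for a given gain, most of the probability removed from p₀ ends up close to the centre.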

I hope I’ve made it clear that, starting with an arbitrary set of eight three-digit numbers, all at most 0.100, I was able to construct a member of the family of 17-value discrete symmetric unimodal distributions with probabilities totalling to unity and a variance of unity.

Perhaps I was lucky in my choice of an initial 8-value set of p-values. I recommend averaging the set of starting numbers and, if the average is greater than 1÷17, look for another set (or modify the starting set) until you find a set with average below the stated limit. In my case, luckily (?), 0.028 < 0.059. Why 1÷17? Because then p₀ = 1-2×[(1÷17)×8] = 1÷17 follows from my third restriction. If your initial set is invalid (p₀ should be greater than or equal to the largest of the numbers of the initial 8-number set) then a simple fix is to ensure you have 4 two-significant-digit values (e.g. 0.063) and 4 one-significant-digit numbers (e.g. 0.003), ensuring an upper bound of 0.054 (< 0.059) to the average of the set.

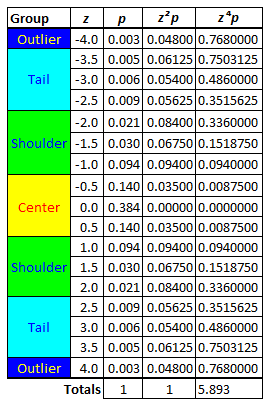

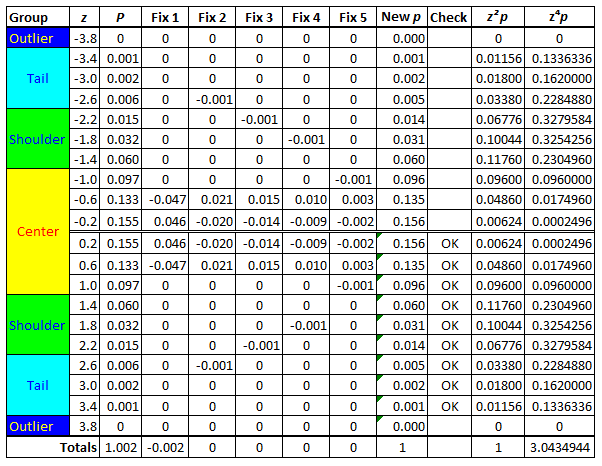

We are now able to add a column, z⁴p, the total of which is the kurtosis.

I was pleased when I discovered that my chosen set of eight initial values resulted in a large number for p₀ because, in my experience, large p₀-values tend to lead to a variance below the target, 1. Fixing that is easy, a correction which will reduce the value of p₀. In this case, 0.548 is reduced to 0.384.

This member of the family is a little too extreme for my liking. The p-value of 0.384 for z=0, compared to 0.140 for |z|=0.5, is begging for an accusation of a bias towards peakedness, so let’s modify this distribution.

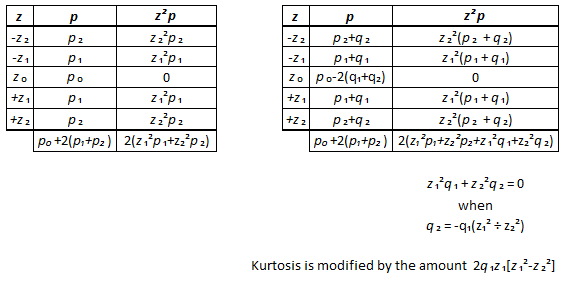

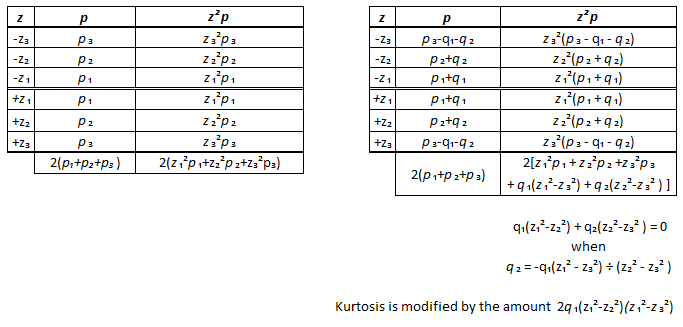

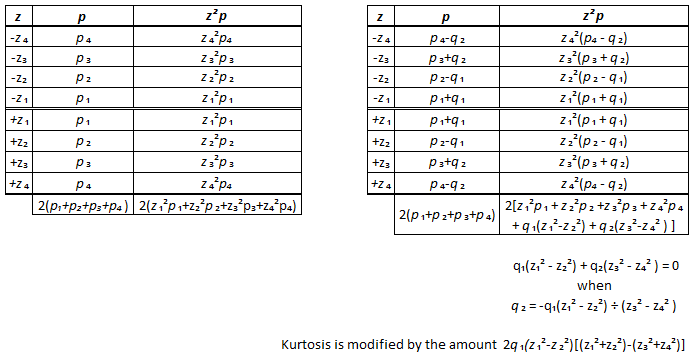

There are at least three methods of modifying this member of the family of distributions which involve modifying only the p-values. These methods require that a value of the (positive or negative) addition q₁ be selected (subject to restrictions). From this choice, q₂ is calculated, and q₃, when present.

The first method includes z = 0.

The second method excludes z = 0 and modifies 3 p-values.

The third method excludes z = 0 and modifies 4 p-values, in two pairs.

When choosing an amount for q₁, it helps to know the absolute value of 4(z₃²-z₄²) - a multiple of the latter, or of selected factors of that number, is a good choice for the former. For example, if z₃=0.5 and z₄=2.5, |4(z₃²-z₄²)|=24=8×3 so choosing q₁ to be an integer multiple of 0.003 will limit the number of decimals in the modified p-values.

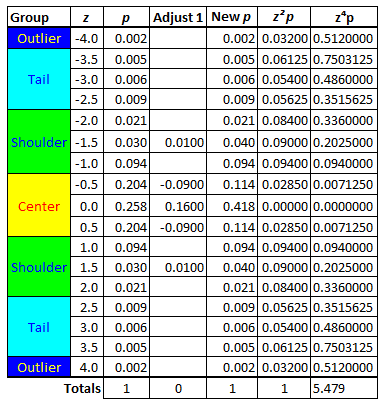

The direct method (selecting a set of eight p-values) is often a better approach to arriving at a desired outcome than attempting to use the methods above to modify an arbitrary initial selection. Selecting values for the eight-value three-decimal-place set which match some preferred outcome and using the techniques described above to complete the distribution to ensure that the restrictions are met will save much time. For example, one might want to create an analogue to the Gaussian/Normal distribution. This is how I chose the initial set. The sixth column was obtained by rounding and making slight adjustments to the third column which was obtained in Excel using the function

NORM.DIST(Upper,0,1,TRUE)-NORM.DIST(Lower,0,1,TRUE)
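For readers without Excel, the same bin probabilities can be obtained in Python from the standard library. This is a sketch under stated assumptions: each z-value is taken to represent the bin from z - 0.25 to z + 0.25 (the Upper and Lower bounds used in the post are not stated), and the function names are illustrative.

```python
import math

def norm_cdf(x, mean=0.0, sd=1.0):
    """Cumulative Normal probability, the counterpart of NORM.DIST(x, mean, sd, TRUE)."""
    return 0.5 * (1 + math.erf((x - mean) / (sd * math.sqrt(2))))

def bin_probability(lower, upper):
    return norm_cdf(upper) - norm_cdf(lower)

half_width = 0.25  # assumed: bins centred on each z-value, half a step either side
z_values = [0.5 * k for k in range(-8, 9)]  # -4.0, -3.5, ..., 4.0
p_values = [bin_probability(z - half_width, z + half_width) for z in z_values]
centre = bin_probability(-half_width, half_width)
```

The raw values fall slightly short of a total of one because the mass beyond ±4.25 is left out, which is one reason for the rounding and slight adjustments described above.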

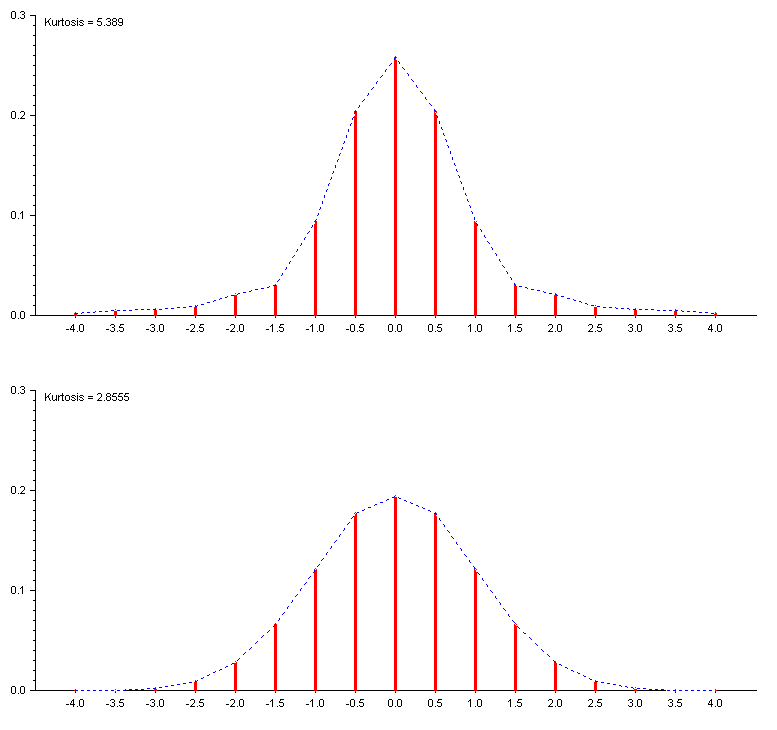

The value for z=0 in the Adj column is equal to 0.001 + (-0.004), the latter arising from the adjustment of the variance to unity. The distribution is displayed graphically in the plot below.

Look for the distribution in the plot labelled with the kurtosis, 2.8555, below. It’s the distribution at the bottom of the display.

Returning to the distribution shown in the first graph of this section, correcting the extremely large value of p for zero requires a large increase for at least one non-zero value of z. That would increase the value of the variance by an amount equal to the square of the non-zero values of z affected, multiplied by the change in the p-values of those z-values. To negate this increase, one must decrease the p-values of z-values not yet changed. Crucially, to generate a large differential in the p-value adjustments, one must recognize that z-values less than 1 are associated with relatively small z²p adjustments (for z=0.5, a unit change in p changes z²p by 0.25 units) whereas z-values greater than 1 are associated with relatively large z²p adjustments (for z=4.0, a unit change in p changes z²p by 16 units). So, if the p-value for z=4.0 is decreased by 0.001, the p-value of z=0.5 would have to be increased by 16÷0.25×0.001 = 0.064 and twice the difference (0.064-0.001=0.063) would have to be subtracted from the p-value of z=0. As follows.

This is the top distribution graphed in the plot below. It’s labelled with its kurtosis, 5.389.

One might feel that the top graph is a little less “smooth” than the bottom graph. Perhaps an improvement would be achieved by increasing the p-value for |z|=1.5 from its value of 0.030 in the direction of the midpoint of the p-values of |z|=1 and |z|=2, (0.094+0.021)÷2 = 0.0575, an increase of 0.0275. Selecting 0.01 for the increase to |z|=1.5 changes the contribution of this value to the variance by 1.5²×0.01 = 0.0225. A reduction of q in the p-value of |z|=0.5 reduces the variance by 0.5²×q = 0.25×q, so matching the latter to the former requires a reduction of q = 0.0225÷0.25 = 0.09. To keep the total of the p-values equal to unity, without affecting the variance, the p-value for z=0 must be increased by twice the net of the modifications for |z|=1.5 and |z|=0.5, 0.09-0.01=0.08, i.e. by 0.16, taking that p-value from 0.258 to 0.418. That change roughly doubles the p-value for |z|=0 compared to the distribution with kurtosis 2.8555. It also makes the distribution more peaked than the distribution I started with, 0.418 being greater than 0.384.

This family of distributions rapidly teaches one that if one “fattens the tails” of a distribution, to increase the kurtosis, the need to ensure a standardized distribution implies one must “thin the body” of the distribution to compensate for the fattening - and the thinning is disproportionate to the fattening because (thinking in terms of a pair of z-values) an increase in the p-value of a |z|>1, call it Z, by an amount q, must be matched by a decrease in the p-value of a |z|<1, call it z, equal to (Z²÷z²)×q. This family of distributions allows one to accommodate the difference (Z²÷z²)×q - q by adding it (twice, once for each member of the pair) to the p-value of z=0 because the variance is unaffected by the change to this p-value. Changing the p-values of |Z|, |z| and 0 by q, -(Z²÷z²)×q and 2×[(Z²÷z²)×q - q], respectively, leaves the total of the p-values and the variance unchanged but the kurtosis changes by the amount 2×q×Z²(Z²-z²). In the example above, 2×0.01×1.5²(1.5²-0.5²) = 0.09, changing 5.389 to 5.479.
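The three-way adjustment can be verified with a short Python sketch. The starting p-values below are hypothetical (they total to one but are not standardized, which does not matter for checking the changes), and the function names are illustrative. The shift uses Z = 1.5, z = 0.5 and q = 0.01, as in the example above.

```python
def moments(z, p):
    """(total probability, sum of z^2*p, sum of z^4*p) for a symmetric distribution.

    z, p list the values for z >= 0 with z[0] == 0; each z > 0 is mirrored at -z.
    """
    total = p[0] + 2 * sum(p[1:])
    second = 2 * sum(pi * zi**2 for zi, pi in zip(z[1:], p[1:]))
    fourth = 2 * sum(pi * zi**4 for zi, pi in zip(z[1:], p[1:]))
    return total, second, fourth

def shift(z, p, i_tail, i_body, q):
    """Add q at |Z| = z[i_tail], remove (Z^2/z^2)*q at |z| = z[i_body], balance at z = 0."""
    ratio = z[i_tail] ** 2 / z[i_body] ** 2
    new_p = list(p)
    new_p[i_tail] += q
    new_p[i_body] -= ratio * q
    new_p[0] += 2 * (ratio * q - q)
    return new_p

z = [0.5 * k for k in range(9)]
p = [0.30, 0.20, 0.08, 0.03, 0.02, 0.01, 0.005, 0.003, 0.002]  # hypothetical starting values
shifted = shift(z, p, i_tail=3, i_body=1, q=0.01)
before, after = moments(z, p), moments(z, shifted)
fourth_moment_change = after[2] - before[2]
```

For a standardized distribution the sum of z⁴p is the kurtosis, so the change of 0.09 in that sum is the change in kurtosis quoted above.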

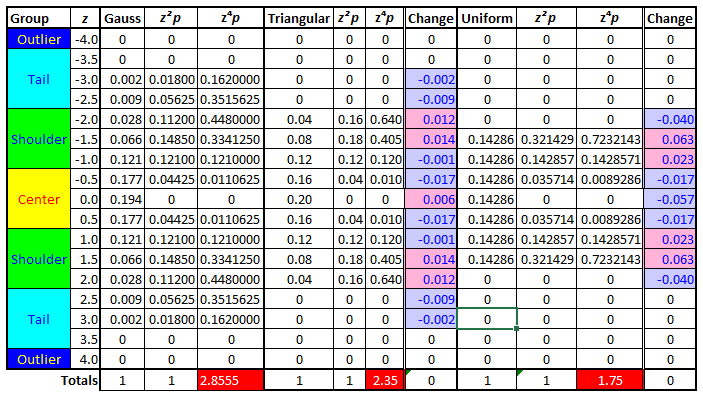

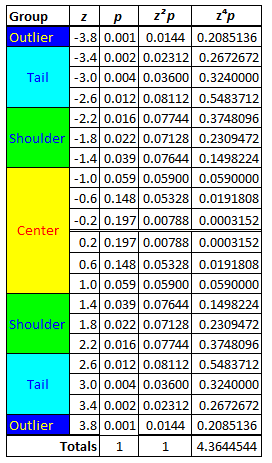

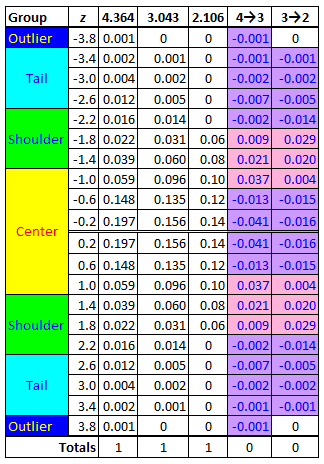

To complete the discussion of this family of distributions, it’s worth displaying the equivalent of the “triangular” and “uniform” distributions in the family. In the following table, the “Change” column is the difference between a distribution and the distribution to the left of it. Note that in the case of the Uniform distribution the convenient restriction of three decimal place p-values had to be relaxed.

The totals, {Tail, Shoulder, Center, Shoulder, Tail} are {-0.011, +0.025, -0.028, +0.025, -0.011} in the change from “Gauss” to “Triangular” and {0.0000, 0.0457, -0.0914, 0.0457, 0.0000} in the change from “Triangular” to “Uniform”. In words, the thinning of the tails in the sequence of these three distributions caused a “fattening” of the shoulders of the distribution in both changes, along with a “deflating” of the Center - a reduction in “peakedness”. These changes in the shape of the distribution occurred despite the fact that the middle distribution might be considered peaked compared to the last distribution which is the epitome of a “flat” distribution (the p-values are all equal to ⅐). It’s misleading to consider a triangular distribution to be peaked - it does not have the tails for a high kurtosis. I’ve included the columns z²p to emphasize that these are the standardized distributions.
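The “Uniform” member can be checked exactly with Python's `fractions` module. The sketch assumes the seven equal probabilities sit on the 0.5-spaced grid at 0, ±0.5, ±1 and ±1.5, which is the placement that gives seven equally likely, equally spaced values a variance of exactly one.

```python
from fractions import Fraction

# Assumed placement: seven equally likely values on the 0.5-spaced grid.
z = [Fraction(k, 2) for k in range(-3, 4)]  # -1.5, -1.0, ..., 1.5
p = [Fraction(1, 7)] * 7

mean = sum(pi * zi for zi, pi in zip(z, p))
variance = sum(pi * zi**2 for zi, pi in zip(z, p))
kurtosis = sum(pi * zi**4 for zi, pi in zip(z, p)) / variance**2
```

Exact rational arithmetic avoids any rounding question: the variance is exactly 1 and the kurtosis is exactly 7÷4 = 1.75, well inside the platykurtic range.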

As before, the dotted blue lines are meant to be a visual aid, not the standard representation of discrete distributions - the red lines.

Summary

Statements like “Therefore kurtosis measures outliers only; it measures nothing about the ‘peak’” miss the point. It is true that, arithmetically, the tails ensure that the kurtosis value is large - but to have tails, one has to have a “thinner” distribution, less in the non-tail region of the distribution. A shift of probability out into the tails of a distribution is not only a “shift to” but also a “shift from”. The shift from may be from the peak, it may not be - but the fact is that there is less probability somewhere, typically, in more than one part of the distribution. I’m objecting to the word “only” in the quoted sentence. That is simply not true.

Appendix A

I strongly recommend that, before you read any further, you examine the statements made in this link, particularly Counterexample 2, which this Appendix addresses.

The distribution under discussion, with both z and p indexed by θ, is a six-value discrete distribution, three pairs of values; let’s label their absolute or positive values Center, Shoulder and Tail (so -Center, -Shoulder and -Tail complete the list of 6 values). When θ = 1, the p-values for the Tail and Shoulder pairs are zero so the distribution degenerates to Center and -Center (equal to 1 and -1 respectively) with probabilities equal to ½ each. This two-value discrete distribution, the simplest possible discrete uniform distribution, is the one defining the smallest value kurtosis can have, 1.

The discussion in the link is about what happens as θ ⭢ 1.

There is no mention of the other extreme for possible values of θ. When θ = 0, Shoulder, -Shoulder, Center and -Center are all numerically equal to zero - but the probability of Center is 0 so the effect is that the distribution degenerates to a three-value distribution, -√2, 0, +√2 with probabilities ¼, ½ and ¼ respectively. This is the simplest possible example of a triangular distribution - a “peaked” distribution which, because it lacks thick (thickened) tails, has a kurtosis value suggesting platykurtism rather than leptokurtism - in this case the kurtosis is [-√2]⁴·¼ + 0 + [√2]⁴·¼ = 2.

If the smallest possible value for the kurtosis (at the platykurtic end of the scale) is 1 and the Gaussian distribution, with its kurtosis value of 3, is considered mesokurtic, then one might ask, what’s the numerical boundary between mesokurtic and platykurtic? A point halfway between 3 and the lower bound, 1, i.e. (3+1)÷2=2, is a candidate.

As θ ⭢ 0, the kurtosis of the members of this family of distributions tends towards 2, i.e. the possible values of the kurtosis for the (non-degenerate members of the) family are greater than 1 but less than 2.
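The range of kurtosis values for this family can be confirmed numerically. The sketch below uses the second construction method described earlier (z₁² = θ, z₂² = 2θ, z₃² = 2 with p₁ = θ÷2 and p₂ = p₃ = (1-θ)÷4) and scans θ over a grid. The closed form 2 - 2θ + 2θ² - θ³ is my own expansion of the sum, not a formula quoted from the link.

```python
def counterexample2(theta):
    """Variance and kurtosis of the theta-indexed six-value family."""
    z_squared = (theta, 2 * theta, 2.0)
    p = (theta / 2, (1 - theta) / 4, (1 - theta) / 4)
    variance = 2 * sum(pi * zs for zs, pi in zip(z_squared, p))
    kurtosis = 2 * sum(pi * zs**2 for zs, pi in zip(z_squared, p))
    return variance, kurtosis

def closed_form(theta):
    return 2 - 2 * theta + 2 * theta**2 - theta**3

thetas = [k / 100 for k in range(1, 100)]  # 0.01, 0.02, ..., 0.99
results = [counterexample2(t) for t in thetas]
```

Over this grid the variance stays at one while the kurtosis falls steadily from just under 2 towards 1 as θ increases.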

My point is that this counter example is about a family of distributions which is limited to the platykurtic range of kurtosis values. Conclusions based on the behaviour of this family should be extrapolated to the full range of kurtosis values with care. I’ve made similar comments about the first counter example in this link.

It would seem that the 17-value discrete distribution is far more informative than any of the limited 4-value, 5-value or 6-value families discussed above. The only remaining issue is the inclusion of zero as one of the z-values. This is investigated in the next Appendix.

Appendix B

A discrete distribution with an even-numbered list of values, with at least three values on each side of zero in each of the groups labelled Center, Shoulders and Tail and one in the group labelled Outliers, suggests at least a 20-value distribution.

To keep a constant increment between the values, if f is the smallest value greater than zero (so that the first value less than zero is -f and the distance between values is 2f) then the next largest value moving away from zero is 3f, then 5f, 7f and so on. The z-value in the Outlier group should be in the region of 3, so a first guess for 2f, the gap between successive numbers, is [(+3)-(-3)]÷20 = 0.3 and hence f = 0.15. In my opinion, a crucial value to include is z=1 because this marks the boundary between values where raising to a power results in numerically smaller values than the original numbers and where the result is numerically larger than the original. I’m comparing squaring 0.5 to squaring 2.0. Choosing f = 0.15 yields the sequence 0.15, 0.45, 0.75, 1.05, etc., whereas the choice f = 0.2 yields 0.2, 0.6, 1.0, 1.4, etc. This explains my choice in the examples below - but my choice is not prescriptive.
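The two candidate spacings are easy to compare in code. The sketch below lists the ten positive values for each choice of f and checks whether z=1 is among them; the function name `positive_grid` is only illustrative.

```python
def positive_grid(f, count):
    """Odd multiples f, 3f, 5f, ... rounded to suppress float noise."""
    return [round((2 * k + 1) * f, 10) for k in range(count)]

grid_015 = positive_grid(0.15, 10)  # 0.15, 0.45, 0.75, 1.05, ...
grid_020 = positive_grid(0.20, 10)  # 0.2, 0.6, 1.0, 1.4, ...

# Only the f = 0.2 grid contains z = 1 exactly
print(1.0 in grid_015, 1.0 in grid_020)  # prints: False True
print(grid_020[-1])                      # prints: 3.8
```

With f = 0.2 the ten positive values run from 0.2 to 3.8, which, mirrored about zero, gives the 20-value list.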

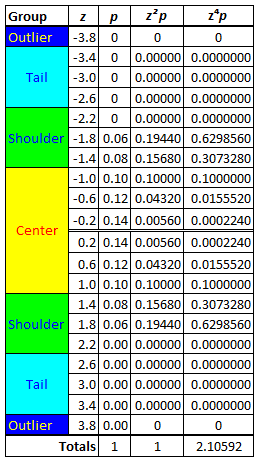

The first step is to make a reference distribution.

Rounding to three decimal places yields a first attempt at the required distribution. Please ignore the fact, for the moment, that the p-values don’t total to unity. Instead, note the labelling. In my opinion, it’s a little more “natural” than the labelling for the odd distribution above. Excel’s NORM.DIST function was used to obtain these initial values. In the last row of the table, the sum of the z²p values and the sum of the z⁴p values have each been corrected by dividing by 1.002, the total of the p-values. The former gives the variance; the corrected z⁴p sum has then been divided by the square of that variance to give the kurtosis.
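For readers without Excel, here is a rough Python equivalent of this step. The exact binning used with NORM.DIST is not spelled out here, so the sketch makes an assumption: each p-value is the standard normal probability of the interval (z - f, z + f), rounded to three decimal places. Under that assumption the p-values total 1.002 and the z²p values total 1.03016, which agrees with the figures used in the text, but the variable names and the binning rule are mine.

```python
from statistics import NormalDist

F = 0.2            # smallest positive value; spacing between values is 2F
N_POS = 10         # ten positive values, so twenty values in total
cdf = NormalDist().cdf

z_pos = [round((2 * k + 1) * F, 10) for k in range(N_POS)]
# Assumption: each p is the standard normal mass in (z - F, z + F), to 3 d.p.
p_pos = [round(cdf(z + F) - cdf(z - F), 3) for z in z_pos]

# The distribution is symmetric, so double the positive-side sums
total_p = 2 * sum(p_pos)
sum_z2p = 2 * sum(z ** 2 * p for z, p in zip(z_pos, p_pos))
sum_z4p = 2 * sum(z ** 4 * p for z, p in zip(z_pos, p_pos))

# Corrections described in the text: divide by the p total, then
# divide the fourth-moment figure by the square of the variance
variance = sum_z2p / total_p
kurt = (sum_z4p / total_p) / variance ** 2
print(round(total_p, 3), round(sum_z2p, 5), round(kurt, 3))
```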

The next step is to correct the total of the p-values, as well as the variance. If this is done with the p-values for the pair of z-values closest to zero, 0.2 and 0.6, there will have to be a difference of -0.002 in the modification, an amount of -0.001 either side of zero. Let s be the adjustment for z=0.6 and t be the adjustment for z=0.2. Then s+t=-0.001 implies s=-0.001-t, and the change to the z²p values will be s×0.6²+t×0.2² = (-0.001-t)×0.36+t×0.04 = -0.00036-0.32t, which must be equal to -0.03016÷2 = -0.01508. Solving for t yields 0.046 and hence s = -0.047.
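The same pair of equations can be solved in a couple of lines. This is just the arithmetic above restated as a sketch; the names `dp` and `dv` for the per-side excesses are my own.

```python
# Two conditions on the adjustments t (for z = 0.2) and s (for z = 0.6),
# per side of zero:
#   s + t             = dp    (fix the total of the p-values)
#   0.6^2*s + 0.2^2*t = dv    (fix the total of the z^2 p values)
z_small, z_large = 0.2, 0.6
dp = -0.002 / 2      # excess probability per side
dv = -0.03016 / 2    # excess z^2 p per side

# Substitute s = dp - t into the second condition and solve for t
t = (dv - z_large ** 2 * dp) / (z_small ** 2 - z_large ** 2)
s = dp - t
print(round(t, 3), round(s, 3))  # prints: 0.046 -0.047
```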

An alternate approach is to total the p-values and z²p values for the positive z-values other than the pair selected for modification; let these amounts be q and V respectively. Then one can solve for the modified p-values of the selected pair.
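A minimal sketch of this alternate approach follows. The positive-side p-values for z=1.0 to z=3.8 used here are assumed for illustration (they come from binning a standard normal at width 0.4 and rounding, not from the table), and `solve_pair` is a hypothetical helper name. Each side of zero must carry half of the total probability and half of the variance.

```python
def solve_pair(a, b, q, V, half_total=0.5, half_var=0.5):
    """p-values for z = a and z = b such that, per side of zero,
    p_a + p_b = half_total - q and a^2 p_a + b^2 p_b = half_var - V."""
    rest_p = half_total - q
    rest_v = half_var - V
    p_b = (rest_v - a ** 2 * rest_p) / (b ** 2 - a ** 2)
    return rest_p - p_b, p_b

# Assumed (illustrative) untouched positive-side values, z = 1.0 to 3.8
z_rest = [1.0, 1.4, 1.8, 2.2, 2.6, 3.0, 3.4, 3.8]
p_rest = [0.097, 0.060, 0.032, 0.015, 0.006, 0.002, 0.001, 0.000]

q = sum(p_rest)
V = sum(z ** 2 * p for z, p in zip(z_rest, p_rest))
p_02, p_06 = solve_pair(0.2, 0.6, q, V)
print(round(p_02, 3), round(p_06, 3))
```

Both routes should land on the same pair of p-values, since they impose the same two conditions.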

Having corrected the total of the p-values and the total of the z²p values, one can apply the formulas derived for the case of odd-numbered distributions to modify p-values without affecting the total of the p-values or the total of the z²p values. For example, the modification to the p-values of three positive z-values can be used to test five possible modifications to the distribution so far.

As you can see, the initial correction changed the distribution drastically - but the subsequent tweaks undid these changes. Compare the columns p and New p. As it turned out, the modification using z=1.4 was not needed, but the “tweaks” - reducing the p-value of a large value of z (i.e. a value greater than 1) by a mere 0.001 - had large effects on the p-values of z=0.2 and z=0.6.

Fattening the tail of the distribution - increasing the p-values in this area of the distribution - which necessarily reduces the p-values in the rest of the distribution - increases the value of the kurtosis.

Thinning the tails has the opposite effect.

Relaxing the convenience of 3 decimal places, one can take the process to an extreme.

We are now in a position to examine the progression of distributions from the leptokurtic end of the kurtosis scale through the mesokurtic portion towards the platykurtic end of the scale.

Reduction in kurtosis occurs because p-values are reduced for z-values in the tails of the distribution. There are two consequences to this. Firstly, the restriction that the total of the p-values must stay unchanged (equal to 1) implies that there must be an increase elsewhere in the distribution. This cannot be one-to-one. I mean that a decrease of 0.001 in the p-value for z=3.8 cannot simply be matched by an increase of 0.001 in the p-value of, say, z=1.8. This is because the reduction in the p-value of the large z-value reduces the variance by 0.001×3.8² = 0.01444, so, to keep the sum of the z²p values equal to unity, one needs q×1.8² = 0.01444, or an increase of q = 0.01444÷1.8² ≈ 0.0045 for z=1.8, more than four times the reduction in the tail. To meet both requirements - the sum of the p’s = 1 and the sum of the z²p’s = 1 - a reduction in the p-value of a z in the tails will be matched by changes to the p-values elsewhere in the distribution which total to an amount countering the reduction in the tails. In the mathematics displayed in this appendix, we saw this example.
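The mismatch between the two requirements can be checked directly. The sketch below takes the reduction of 0.001 at z=3.8, works out the increase at z=1.8 that would restore the z²p total on its own, and shows how far that leaves the total of the p-values from where it started. The variable names are mine.

```python
z_tail, z_mid = 3.8, 1.8
dp_tail = -0.001                       # reduction in the tail p-value

dv_tail = dp_tail * z_tail ** 2        # change in the z^2 p total
dp_mid = -dv_tail / z_mid ** 2         # increase at z_mid that restores it
net_dp = dp_tail + dp_mid              # leftover change in the p total

print(round(dv_tail, 5), round(dp_mid, 5), round(net_dp, 5))
```

A non-zero `net_dp` is the reason a single compensating value is not enough: fixing the variance with one value breaks the probability total, so at least two other p-values have to move.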

In short, to accommodate a reduction of 0.001 for z=2.6, a “large” increase for an intermediate-sized value of z (z=0.6) is paired with a slightly smaller reduction for a smaller value of z (z=0.2). The total of the adjustments, -0.001-0.020+0.021, is zero - but so is

(2.6)²×(-0.001)+(0.2)²×(-0.020)+(0.6)²×(+0.021) = -0.00676-0.00080+0.00756 = 0.
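The pair of compensating adjustments can be obtained for any choice of three z-values by solving the two conditions (adjustments sum to zero, z²-weighted adjustments sum to zero). The sketch below does this for the example just given and also reports the change in the z⁴p total, which is what moves the kurtosis when the variance is held at unity. The function name `compensate` is hypothetical.

```python
def compensate(z_tail, d_tail, z_a, z_b):
    """Changes at z_a and z_b that offset a change d_tail at z_tail,
    keeping both sum(p) and sum(z^2 p) unchanged."""
    d_b = -d_tail * (z_tail ** 2 - z_a ** 2) / (z_b ** 2 - z_a ** 2)
    d_a = -d_tail - d_b
    return d_a, d_b

z_tail, z_a, z_b = 2.6, 0.2, 0.6
d_tail = -0.001
d_a, d_b = compensate(z_tail, d_tail, z_a, z_b)

# Both of these should vanish; the fourth-moment change should not
sum_dp = d_tail + d_a + d_b
sum_dz2p = z_tail ** 2 * d_tail + z_a ** 2 * d_a + z_b ** 2 * d_b
sum_dz4p = z_tail ** 4 * d_tail + z_a ** 4 * d_a + z_b ** 4 * d_b
print(round(d_a, 3), round(d_b, 3), round(sum_dz4p, 6))
```

For these inputs the change in the z⁴p total is negative, so, per side of zero, thinning the tail at z=2.6 lowers the fourth moment while the variance stays put.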

This particular example was chosen to correct an over-correction but the last table above suggests that reductions in the tail tend to be matched by corrections in the shoulders paired with corrections in the center. In short, the shoulders “fatten” and the center “deflates”.

Note the ambiguity of the case z=1. In my opinion this value is on the border between the center and shoulders. Sometimes this value contributes to the center, sometimes to the shoulders. The shoulders, in our three examples above, stretch from |z|=1 to somewhere near |z|=2.

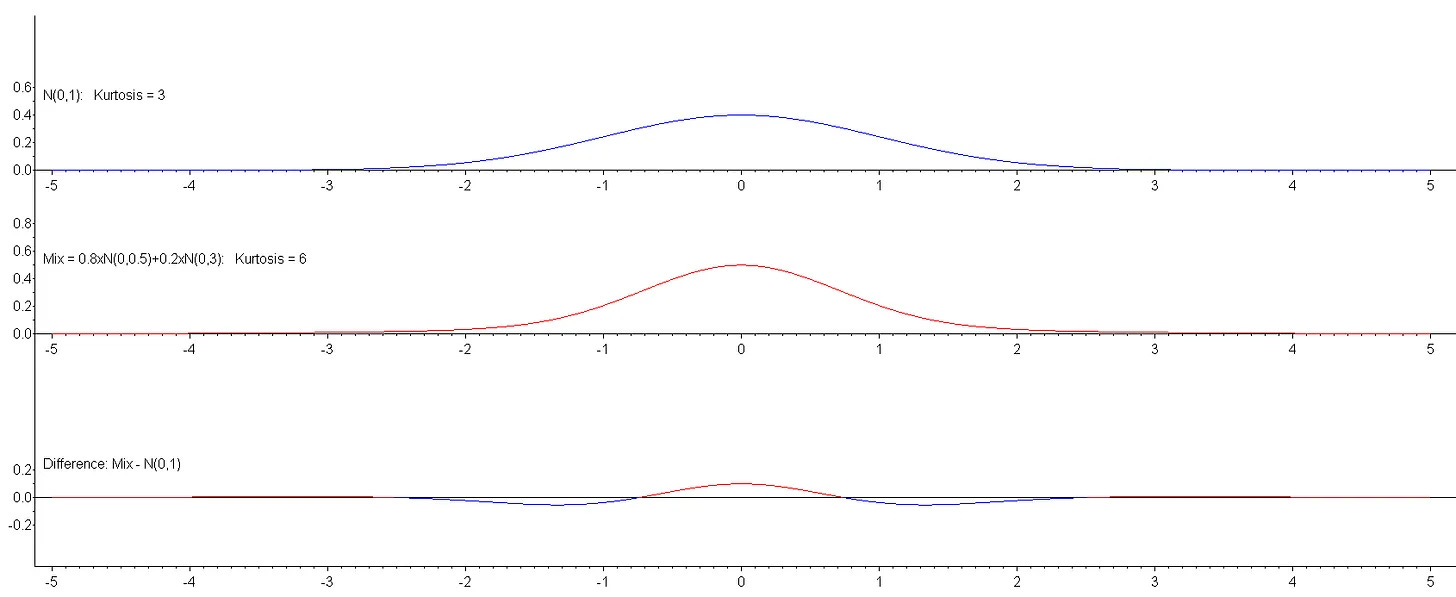

In Appendix B of this post, we saw, in the plot of the difference between two continuous distributions, that the center is around zero, the shoulders run roughly from (just under) 1 to (just over) 2 and the tails lie beyond that.

The evidence presented is incontrovertible. Increasing kurtosis modifies the shape of a distribution - and, in a general sense, increased kurtosis results in increased peakedness accompanied by “thinned” shoulders. This is necessitated by the restrictions that the p-values must total unity and that the variance must be equal to unity. Thickening the tails of a distribution is typically not reflected by a change in one other place but in two other places, vaguely described as the center and the shoulders. The center is characterised by z²<|z| while the shoulders are characterised by z²>|z| but with an upper bound to |z|, because even a small value for p in z²p will make a large contribution to the variance; p will need to be so small that |z| would be better described as being in the tail of the distribution. This situation is perfectly displayed in the Difference plot in the last graph above.